These days you must have heard many audios as well as seen a lot of videos that are available in multiple languages. But the question is: How exactly do they translate an audio file from one language to another? The short answer to this is Audio Translation with Generative AI. But in this blog, I am going to guide you on how you can translate an audio file from one language to another. To achieve this we will be using two models, whisper and bark for generating the transcript and then generating speech from the transcript respectively.

Let’s get to know more about these models.

Open AI Whisper

Whisper is a Generative AI model launched by Open AI. It is an automatic speech recognition system that can recognise audio inputs and can generate the transcript. It is trained on 680,000 hours of multilingual and multitask data. By using this model we can generate transcripts in multiple languages and we can also translate them into English, i.e. we can generate a transcript in the English language from the audio that is in a different language.

The benefit of using Whisper is that it is an open-source AI model and can be used to serve as a foundation for building any useful application.

There are 5 models in Whisper – tiny, base, small, medium, and large. In our project we are going to use a medium model, you can use any of the above-mentioned models based on your system’s specifications and requirements.

Refer here to check your system requirements and appropriate model.

Suno-AI Bark

Bark is a text-to-audio Generative AI model created by Suno. It can generate highly realistic, multilingual speech as well as other audio through text. But here’s the cool part – it’s not just for talking. This Generative AI can also make other sounds like music, background noises, and even simple effects. It’s so smart that it can even express emotions without using words, like laughing, sighing and even crying.

Suno is letting other researchers and developers use Bark. They’re sharing the special settings of Bark so that anyone can use it to develop things like talking apps and cool audio effects, and even for business stuff.

In our project, we will be using a bark model to generate audio from the translated scripts so that the output voice does not sound robotic.

Now let’s see how we can translate an audio from one language to another.

Audio translation from one language to another with Generative AI

In this code, I will be taking an audio file in .wav format and will be translating it into another language. The following steps will be followed.

Step 1 – Taking the audio input

Step 2 – Generating the transcript of the audio file using whisper

Step 3 – Translating the transcript from one language to any language of choice.

Step 4 – Generating speech from translated transcript using bark.

So this was a quick workflow that we followed, let’s dive into the mechanism with hands-on coding.

Requirements

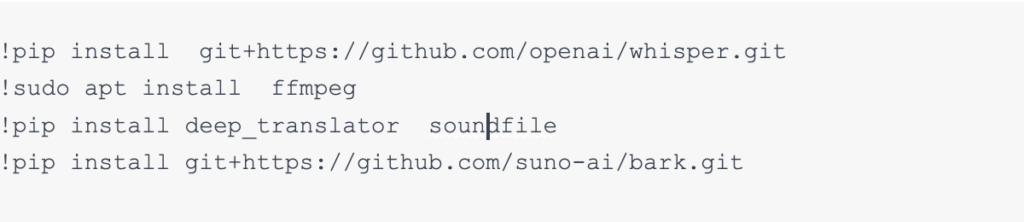

Before we start to code, make sure to install these libraries in your system. I would recommend using Google Colab.

Taking Audio Input

The first step is taking an audio input that you want to translate. For now, I am taking audio of a single speaker to get the translation output. For multiple speakers which is an advanced step, we need to add speaker diarization, for the basic steps we are going with the single speaker.

Note:- Make sure that the audio is in .wav format.

Now we have Uploaded our audio file successfully using the whisper model. I have trimmed the audio to 30 seconds to make sure that the model will not take much memory. For large audio files, you can transcribe it in batches and later on merge the transcript.

Generating the transcript of the audio file using whisper Generative AI

The next step is to generate the transcript from the audio we just uploaded. Make sure that you have changed your Colab runtime to T4 gpu.

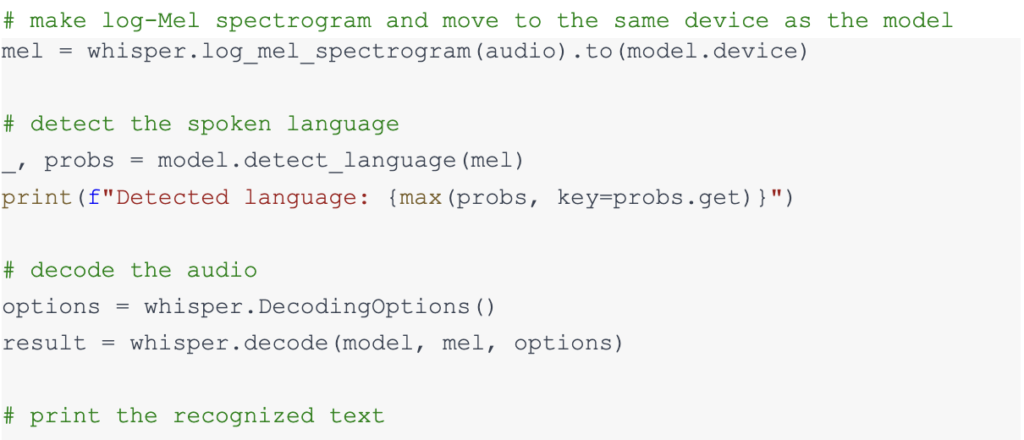

In the above code, we first created a log-mel spectrogram of the audio. A log Mel spectrogram is a representation of an audio signal’s loudness, or amplitude, as it varies over time at different frequencies. After that, we moved it to the device so that it could use GPU and we could get fast output, as most of the Generative AI models perform more efficiently on GPU.

We also added language detection to detect the audio language and finally decoded the audio and printed the output transcript of the audio file that we provided.

Translation

Now that we have our transcript we need to translate it to another language. For that, we will need the Google Translator library.

We have installed a deep translator library previously and now we will import GoogleTranslator from it. It can translate an input text from one language to another. To check the supported languages u can use the code below.

I will be translating my transcript from English to French.

Here we do not need to specify the input language, we will only choose the output language and provide the language code. So now our transcript is translated into French. We can also translate it to many more languages based on the code but make sure that the translated language is also supported by the Suno AI’s Bark model that we are using for speech-to-text.

Refer here to check supported languages

Generating speech from translated transcript using bark

We have a translated transcript and now we have to generate speech from the text. There are many speech-to-text models available but we will be using Suno AI’s Bark as this model’s output voice is far better than the other’s. It also contains expressions that will help us in generating a voice that does not sound robotic.

Limitations

There is a limitation with this model as it can only generate audio of 15 seconds in a go. To overcome this limitation we will firstly break our transcript into different sentences and do the generation of audio from each sentence and later on concatenate all audio together to generate a single audio file.

Process

To break transcripts into different sentences we will be using the nltk library. Make sure to install it.

Import the Suno bark model and other necessary libraries.

Load preloaded models using the below command

After loading the models let’s split our script into different sentences.

Great! If you print the sentences you can see that the transcript has been converted into a list consisting of multiple sentences, we will iterate through sentences in the list to generate the audio. Later on, we can merge all the audio to create a single audio.

Now that we have our transcript in French I will be using a French speaker prompt for a better speech. You can choose the speaker based on your transcript language.

Check for the speaker’s id from here.

This will take some time based on your transcript length. After this, we will get arrays consisting of all the audio data.

We will concatenate them using numpy.concatenate which is a function in the NumPy library, it is used to concatenate (join together) two or more arrays along an existing axis. This will give us the array which will contain all the information of the audio.

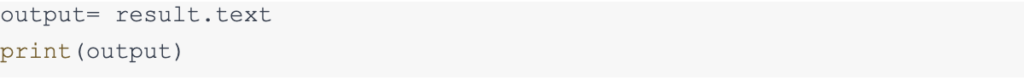

We will save that array in the form of a .wav file into our system. To save data we will use the soundfile library that will take 3 parameters as file name, audio data, and sample rate.

Finally, with these steps, we have successfully translated an audio file to a different language and saved it by the name output.wav.

Conclusion

In conclusion, this guide shows how to use Generative AI models, Whisper and Bark to translate audio. Whisper turns spoken words into text, and Bark transforms that text into realistic and expressive audio. The process involves uploading a .wav audio file, generating a transcript with Whisper, translating it with Google Translator, and using Bark to create spoken content in a different language. Following the steps and code provided makes it easy for anyone to translate and generate diverse audio content, bridging language gaps. These Artificial Intelligence models, Whisper and Bark, are open source and they offer a user-friendly solution for developers and researchers to create innovative applications for audio translation.